How to Submit and Update an Assignment through Moodle

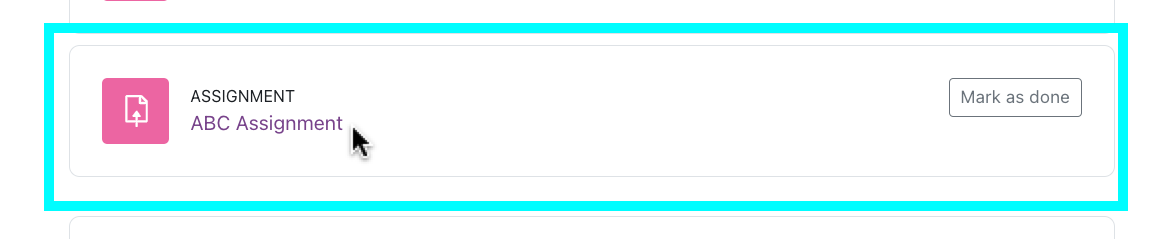

1. Click the link for the assignment. Your instructor determines which file types, how many files, and what file sizes are acceptable for each assignment.

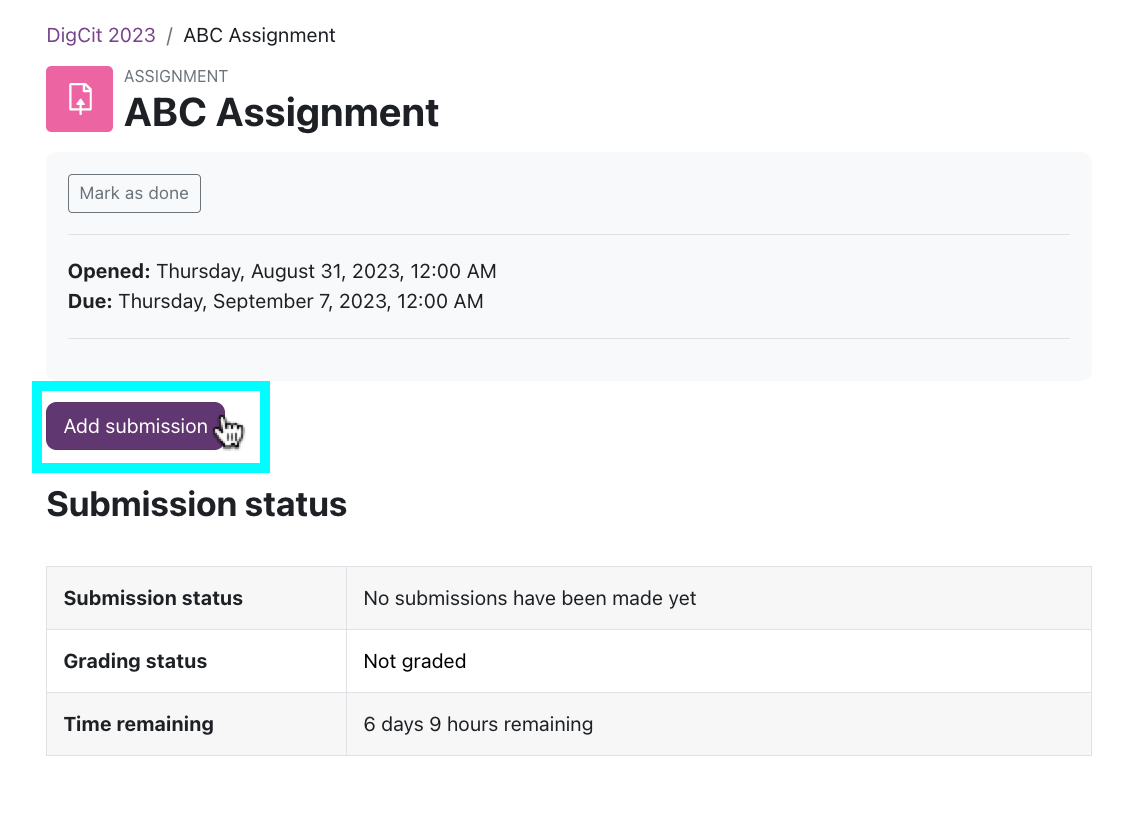

2. Review the assignment and due date.

- Depending on how your instructor set up the assignment, you may be locked out of submitting anything once the due date and time pass. Submit on time!

3. Click the Add Submission button.

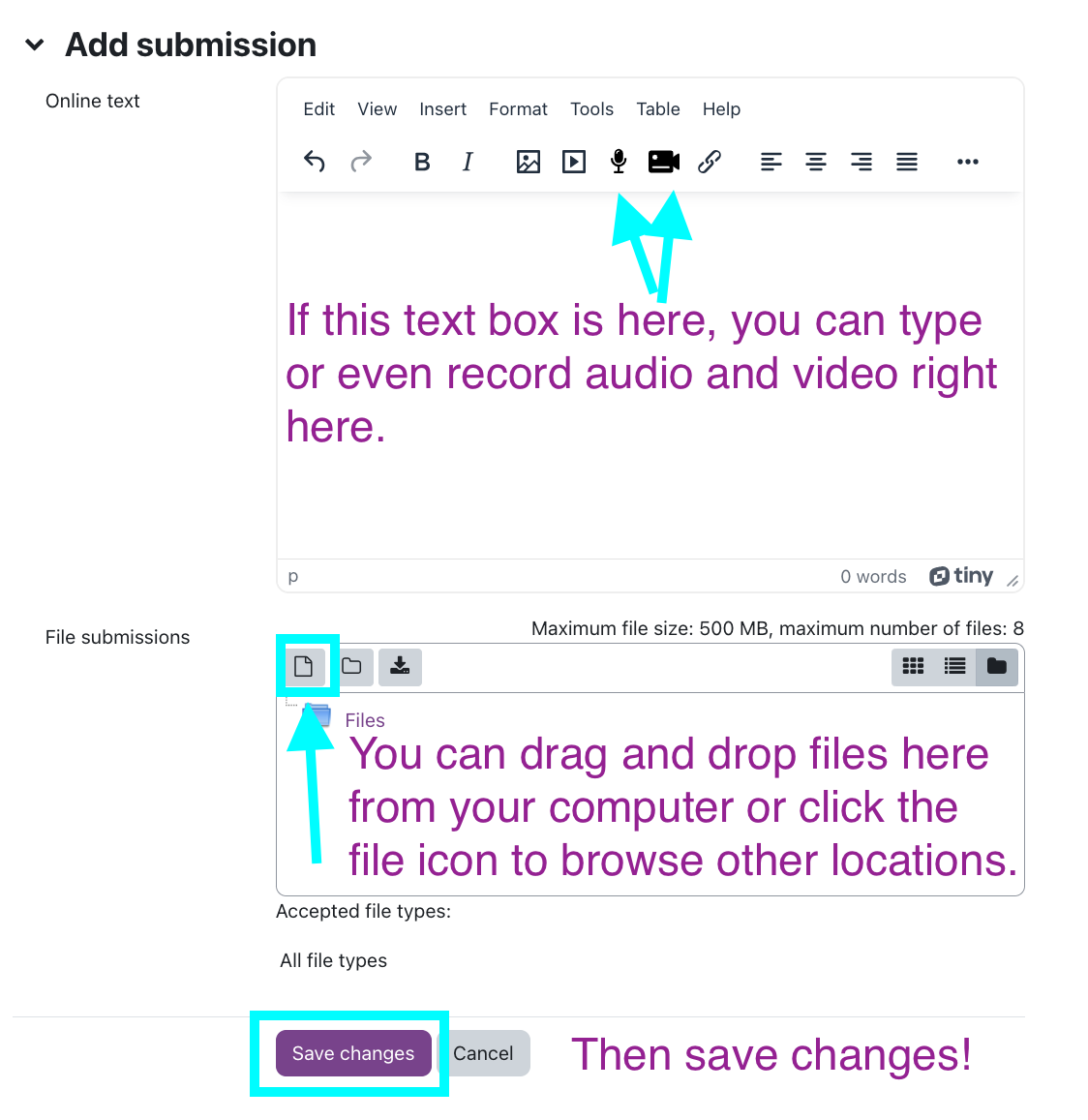

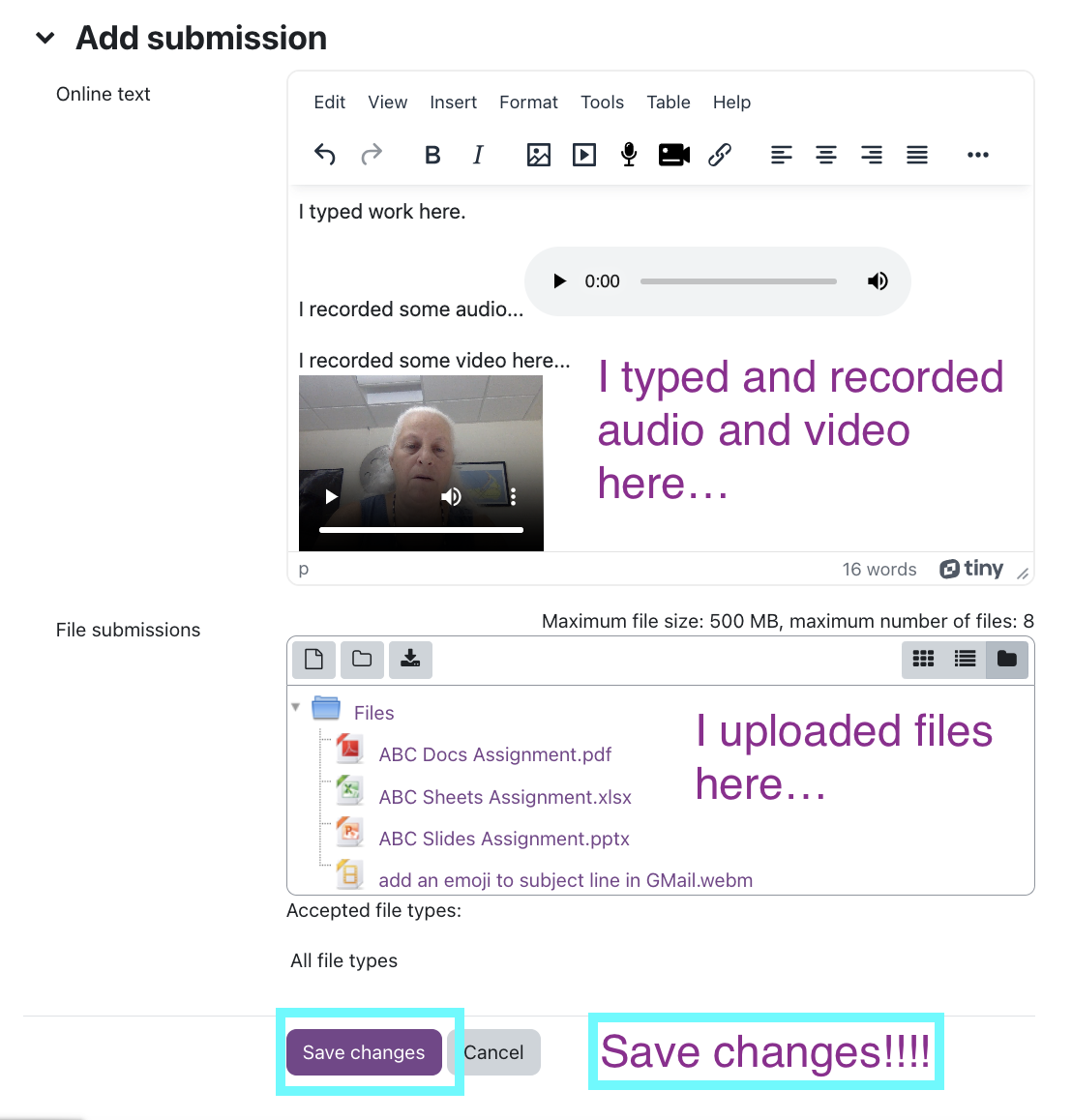

4a. Depending on how your instructor set up the assignment, you may or may not have an "Online text" box for entering text directly. Add text as appropriate for the assignment.

4b. Upload a file (if you're submitting a paper, for example) by dragging and dropping from your computer into Moodle.

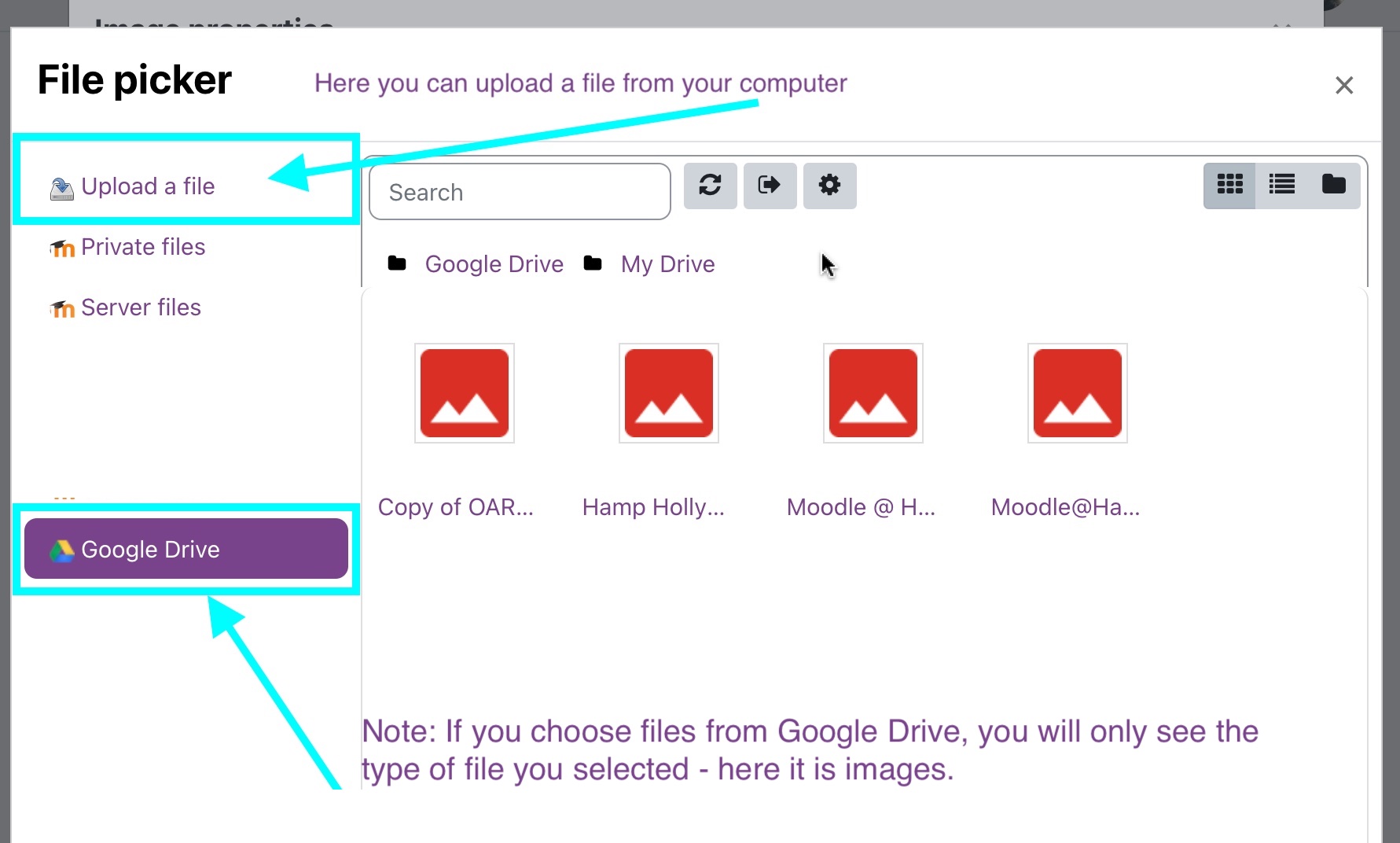

4c. If you want to upload files, choose the location: your computer, Google Drive, etc.

5. In this example, I typed text and recorded audio and video in the first box, then uploaded 4 files in the second box.

Click Save Changes.

6. You can go back in and edit the file if needed.

7. If you edit anything, click the "Submit assignment" button AGAIN!

All set!

Last modified: Friday, September 1, 2023, 8:38 AM